I Let an AI Build My App. Two Years Later, I Asked Another AI to Fix It.

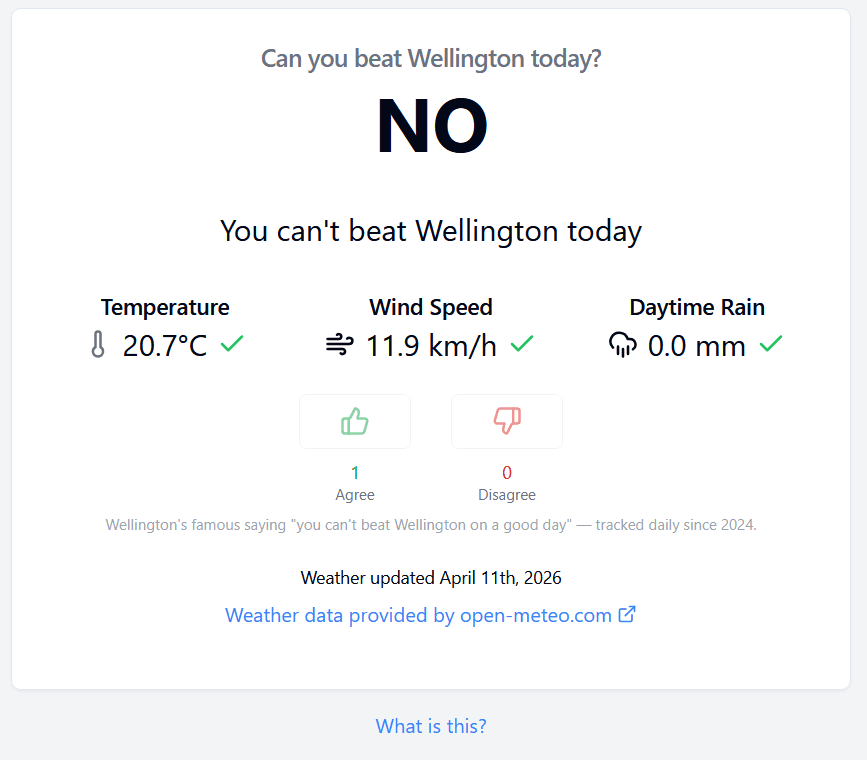

Back in 2024 I built Can You Beat Wellington, a tiny hobby app with one job: check whether Wellington is having a genuinely good day (≥18°C, wind under 20 km/h, no rain) and let people vote on whether they agree. Simple concept. Maybe a few hundred lines of real logic.

I built it using Lovable (which was called GPT Engineer at the time). Describe what you want, iterate in chat, get a deployed React + Supabase app. Within an afternoon I had something live. It was genuinely impressive.

Then I mostly left it alone. A few small tweaks here and there, but nothing serious. It just ran.

Fast forward to April 2026. I decided to actually look at what Lovable had generated, and asked Claude to do a proper review and refactor. What followed was a pretty educational few sessions, not because the app was broken, but because of the gap between "works fine" and "is actually well built."

What Lovable shipped

The first commit, made by gpt-engineer-app[bot], included 62 npm packages, 48 shadcn/ui component files, and 25 Radix UI packages. For an app that shows three weather numbers and two voting buttons.

Libraries like framer-motion, zod, and react-hook-form were installed and never used. shadcn/ui is a copy-paste component library where you're meant to add only what you need. Lovable added all of them. 44 of those 48 component files were never imported anywhere in the codebase.

This is just how these tools work. They don't know what you'll need, so they install everything. It's your job to figure out what to keep.

The security stuff

This is the part I'd flag most urgently if you've got a Lovable app running that you haven't looked at closely.

Credentials in source. Lovable generates the Supabase client with credentials hardcoded directly in the file. My project is a public GitHub repo. That anon key was committed on day one and sat there for months. The Supabase anon key is designed to be "safe to expose", but only if Row Level Security is configured, which leads to...
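One common way to keep the key out of the repository is to read it from environment variables at build time. Here's a minimal sketch of that idea. The helper name and the `VITE_`-prefixed variable names are my assumptions (they follow Vite's convention), not names taken from the project.

```javascript
// Hypothetical helper: loadSupabaseConfig and the variable names below are
// assumptions for illustration, following Vite's VITE_ prefix convention.
function loadSupabaseConfig(env) {
  const url = env.VITE_SUPABASE_URL;
  const anonKey = env.VITE_SUPABASE_ANON_KEY;

  // Fail loudly at startup instead of creating a client with missing values.
  const missing = [];
  if (!url) missing.push("VITE_SUPABASE_URL");
  if (!anonKey) missing.push("VITE_SUPABASE_ANON_KEY");
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
  return { url, anonKey };
}

// In a Vite app this would be called with import.meta.env, and the result
// passed to createClient(url, anonKey) from @supabase/supabase-js.
const config = loadSupabaseConfig({
  VITE_SUPABASE_URL: "https://example.supabase.co",
  VITE_SUPABASE_ANON_KEY: "example-anon-key",
});
console.log(config.url);
```

This only keeps the key out of source control. The anon key still ships to the browser in the built bundle, so it doesn't replace Row Level Security.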

No RLS. The Supabase tables had no Row Level Security policies. Technically, anyone with the anon key could SELECT, INSERT, UPDATE, or DELETE any row directly. For a hobby app with no real stakes this was low risk, but it's the kind of thing that earns you security warning emails from Supabase. We added proper RLS policies and routed vote mutations through a SECURITY DEFINER function so the DB itself enforces the constraints.
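On the client side, routing a vote through a database function rather than a direct table write might look roughly like this. The function name `cast_vote`, its argument names, and the `castVote` wrapper are all hypothetical. The only real API here is supabase-js's `rpc()`, which resolves to `{ data, error }`.

```javascript
// Hypothetical wrapper: "cast_vote" and its argument names are assumptions,
// standing in for whatever SECURITY DEFINER function the database exposes.
async function castVote(client, day, agrees) {
  // supabase-js resolves rpc() to { data, error } instead of throwing.
  const { data, error } = await client.rpc("cast_vote", {
    vote_day: day,
    agrees: agrees,
  });
  if (error) {
    throw new Error(`Vote rejected by the database: ${error.message}`);
  }
  return data;
}

// Stub client so the sketch runs without a Supabase project.
const stubClient = {
  rpc: async (fn, args) => ({ data: { fn, args }, error: null }),
};

castVote(stubClient, "2026-04-01", true).then((result) => {
  console.log(result.fn, result.args);
});
```

The point of the design is that the client never writes to the votes table itself. With RLS denying direct writes, the function is the only path in, so the constraints live in one place.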

A leftover dev page with an API key. There was a CSVGen page, a one-off data seeding tool from development, deployed but never linked in the nav. It had a weather API key hardcoded in the component source. Not a huge deal for a free-tier API, but it illustrates the pattern: Lovable generates whatever you ask for during development, and those pages don't go away on their own.

Placeholder analytics. At some point I'd asked for Google Analytics to be added. The generated code used GA_MEASUREMENT_ID as the tracking ID, a placeholder that was never replaced with a real value. The analytics code was loading and making network calls to Google, and tracking absolutely nothing. For months. Classic AI-generated code: structurally correct, functionally inert.

No security headers. No CSP, no X-Frame-Options, nothing. securityheaders.com gave it a D. We added the full set to vercel.json. Getting the CSP right took a few iterations (a YouTube embed and recharts both needed special handling) but it's now properly locked down and scoring an A.
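To give a feel for what "the full set" involves, here's a sketch that builds a CSP string and prints it in the shape of a vercel.json `headers` entry. The directive values are illustrative assumptions, not the project's actual policy. In particular the YouTube and inline-style allowances are my guess at what that kind of "special handling" usually means.

```javascript
// Illustrative directives only: these values are assumptions, not the
// policy the project actually ships.
const cspDirectives = {
  "default-src": ["'self'"],
  "script-src": ["'self'"],
  // Assumption: chart code that sets inline styles needs this relaxed.
  "style-src": ["'self'", "'unsafe-inline'"],
  "img-src": ["'self'", "data:"],
  "connect-src": ["'self'", "https://*.supabase.co"],
  // Assumption: a YouTube embed needs its origin allowed for frames.
  "frame-src": ["https://www.youtube.com"],
  "frame-ancestors": ["'none'"],
};

// Join each directive as "name source source", separated by "; ".
function buildCsp(directives) {
  return Object.entries(directives)
    .map(([name, sources]) => `${name} ${sources.join(" ")}`)
    .join("; ");
}

// The "headers" array as it would appear inside vercel.json.
const vercelHeaders = [
  {
    source: "/(.*)",
    headers: [
      { key: "Content-Security-Policy", value: buildCsp(cspDirectives) },
      { key: "X-Frame-Options", value: "DENY" },
      { key: "X-Content-Type-Options", value: "nosniff" },
      { key: "Referrer-Policy", value: "strict-origin-when-cross-origin" },
    ],
  },
];

console.log(JSON.stringify({ headers: vercelHeaders }, null, 2));
```

Keeping the directives in a map makes the iterations less painful: when the browser console reports a blocked resource, you add one source to one directive and regenerate.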

The performance stuff

The main JS bundle was 877 kB. For a weather checker.

That's recharts and react-day-picker being loaded synchronously on every page, even though they're only used on the About page. Claude replaced recharts with a custom SVG line chart (about 80 lines, no dependencies) and react-day-picker with a custom CSS grid calendar (about 120 lines, no dependencies), then lazy-loaded the entire About page with React.lazy().

Result: 877 kB to 80 kB on the main bundle.
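For a sense of why a single line chart doesn't need a library, the core of one is just scaling values into an SVG path string. This is a sketch of that core, not the code from the repo. The function name and parameters are mine.

```javascript
// Hypothetical helper: turns a series of numbers into an SVG path "d" string
// scaled to fit a width x height box.
function linePath(values, width, height, padding = 4) {
  if (values.length === 0) return "";
  const min = Math.min(...values);
  const max = Math.max(...values);
  const range = max - min || 1; // avoid dividing by zero for a flat series
  const stepX =
    values.length > 1 ? (width - 2 * padding) / (values.length - 1) : 0;

  return values
    .map((value, i) => {
      const x = padding + i * stepX;
      // SVG y grows downwards, so invert to put larger values higher up.
      const y =
        height - padding - ((value - min) / range) * (height - 2 * padding);
      return `${i === 0 ? "M" : "L"}${x.toFixed(1)},${y.toFixed(1)}`;
    })
    .join(" ");
}

console.log(linePath([12, 18, 15], 100, 50, 0));
// prints "M0.0,50.0 L50.0,0.0 L100.0,25.0"
```

The result goes straight into a `<path d="..." fill="none" stroke="currentColor" />` inside an `<svg>` with a matching `viewBox`. Axes and labels make up most of the remaining lines.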

The lesson here is that AI tools optimise for "works," not "fast." They reach for well-known libraries because that's what's in their training data. A developer would ask "do I really need recharts for one line chart?" The generator doesn't ask that question.

Killing the 44 unused shadcn/ui components and their Radix packages took the CSS bundle from 45 kB to 19 kB.

The infrastructure stuff

No CI pipeline, no staging environment, no automated dependency updates. Every change went straight to production. npm audit showed 13 vulnerabilities when we first ran it, including a ReDoS vulnerability in minimatch.

None of this is surprising. Lovable deploys fast by skipping all of it. But it means you're inheriting a project with none of the scaffolding a real codebase needs.

We added a GitHub Actions pipeline (lint, test, build, audit on every push), a staging branch that auto-deploys to a Vercel preview URL, and Dependabot for weekly dependency updates. Also worth noting: Supabase pauses free-tier projects after a week of inactivity, so there's now a GitHub Actions cron job that pings the database every 5 days to keep it alive.
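The keep-alive itself can be tiny: one cheap read through Supabase's REST endpoint is enough to count as activity. Here's a sketch of a script a scheduled job could run. The table name and the environment variable names are assumptions, and with RLS enabled the table needs a policy that lets the anon role SELECT.

```javascript
// Hypothetical keep-alive script. The table name passed in and the
// SUPABASE_* variable names are assumptions for illustration.
async function pingSupabase({ url, anonKey, table, fetchImpl = fetch }) {
  // Supabase exposes tables over REST at /rest/v1/<table>.
  const response = await fetchImpl(`${url}/rest/v1/${table}?select=*&limit=1`, {
    headers: { apikey: anonKey, Authorization: `Bearer ${anonKey}` },
  });
  if (!response.ok) {
    throw new Error(`Keep-alive failed with HTTP ${response.status}`);
  }
  return response.status;
}

// Only hit the network when credentials are present, e.g. inside the cron job.
if (process.env.SUPABASE_URL && process.env.SUPABASE_ANON_KEY) {
  pingSupabase({
    url: process.env.SUPABASE_URL,
    anonKey: process.env.SUPABASE_ANON_KEY,
    table: "weather_days", // hypothetical table name
  })
    .then((status) => console.log(`Keep-alive OK (${status})`))
    .catch((err) => {
      console.error(err.message);
      process.exit(1); // non-zero exit so the scheduled run shows as failed
    });
}
```

Failing the job on a non-2xx response matters more than it looks. A keep-alive that quietly stops working is how you find out about the pause a week too late.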

The architecture stuff

A few things in the code itself that were worth cleaning up.

is_good_day was stored as a computed boolean in every database row. That's fine until you want to change the rules, at which point you need a migration to update every historical row. We removed it and compute it at runtime instead. Rule changes now apply retroactively to all history for free.

The "is this a good day?" logic was duplicated across four different components. Once we extracted it to a single rulesStorage.js, it was straightforward to write proper unit tests for it, which immediately caught two boundary cases that the inline copies had handled inconsistently: wind at exactly 20 km/h should fail, and rain at exactly 0 should pass.

There were zero tests before the refactor. There are 32 now.
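Here's roughly what a single source of truth for the rule looks like, with the boundaries made explicit. The thresholds come from the rules at the top of this post. The names (`RULES`, `isGoodDay`, the reading fields) and the sample readings are made up for illustration and aren't copied from rulesStorage.js.

```javascript
// Thresholds from the post: at least 18°C, wind under 20 km/h, no rain.
// RULES, isGoodDay and the field names are illustrative assumptions.
const RULES = { minTempC: 18, maxWindKmh: 20, maxRainMm: 0 };

function isGoodDay(reading, rules = RULES) {
  return (
    reading.tempC >= rules.minTempC && // exactly 18°C passes
    reading.windKmh < rules.maxWindKmh && // exactly 20 km/h fails
    reading.rainMm <= rules.maxRainMm // exactly 0 mm passes
  );
}

// Made-up readings: the verdict is computed when read, never stored,
// so changing RULES re-evaluates all of history.
const history = [
  { date: "day-1", tempC: 21, windKmh: 12, rainMm: 0 },
  { date: "day-2", tempC: 19, windKmh: 20, rainMm: 0 },
  { date: "day-3", tempC: 18, windKmh: 5, rainMm: 0.4 },
];
const goodDays = history.filter((day) => isGoodDay(day)).map((day) => day.date);
console.log(goodDays); // prints [ 'day-1' ]

// The two boundary cases, written as checks.
console.assert(!isGoodDay({ tempC: 20, windKmh: 20, rainMm: 0 }), "20 km/h fails");
console.assert(isGoodDay({ tempC: 20, windKmh: 10, rainMm: 0 }), "0 mm rain passes");
```

Passing the rules in as a parameter is what makes the function easy to test, and it leaves room for swapping in a different ruleset later without touching the components.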

The numbers

| Metric | Before | After |
|---|---|---|
| npm dependencies | 62 | 30 |
| shadcn/ui component files | 48 | 4 |
| Main JS bundle | 877 kB | 80 kB |
| CSS bundle | 45 kB | 19 kB |
| npm audit vulnerabilities | 13 | 0 |
| Security headers score | D | A |
| Unit tests | 0 | 32 |
| CI pipeline | None | Lint, test, build, audit |
| Staging environment | None | Yes |
| Automated dependency updates | None | Dependabot (weekly) |

Next up for CYBW

The current ruleset for Can You Beat Wellington is static throughout the year. The 18°C cutoff means we only get good days during summer, and the data reflects that. On my to-do list is to look at the historical data set (I have 6 years loaded in there now) and come up with a ruleset that accounts for seasonal changes.

What to take from this

Lovable is genuinely good at what it does. It got a working, deployed app with a real backend out of an afternoon's work. The component structure was sensible, the Supabase integration worked, the UI was... okay.

But it's a prototyping tool, not an engineering team. It installs everything, cleans up nothing, and doesn't set up any of the infrastructure that makes a codebase maintainable over time. That's fine if you know it going in. Just budget some time after the initial build to do the things it skipped.

If you've got a Lovable app running that you haven't looked at closely, the things I'd check first: are credentials hardcoded in source, is RLS configured, are there any leftover dev pages with API keys in them, and what does npm audit say? The answers might be more interesting than you'd expect.

The code is open source if you want to poke around: github.com/patatrat/CanYouBeatWellington
